Henri Hambartsumyan

Administering a cloud environment in any organization bigger than a startup is hard, very hard. There are so many aspects to it that it’s impossible for a single person or team to oversee them all. From access control to workload management, from billing to governance: there is just too much. Hence, it’s no surprise that most organizations have configurations in their cloud with unintended side effects (i.e., misconfigurations). Some side effects only have a small impact on your security posture. Others can be a fatal flaw in your defense against threat actors. But any real-life production environment has these misconfigurations. Even worse, many of the misconfigurations are hard to spot (if you can identify them at all). This is especially true for Azure when you rely on the Azure portal.

This is where tools like AzureHound come in handy. The amazing team behind BloodHound Enterprise has created the awesome BloodHound and AzureHound tools, which have been extremely influential since their release for both offensive and defensive security.

This blog post is aimed at defensive security professionals who are planning to harness the power of BloodHound and AzureHound to increase their security posture. We’ll cover the following topics:

- The basics of running AzureHound.

- Automating AzureHound collection using the Azure cloud.

- Skeleton queries to identify potential attack paths.

As part of this blog post, we will also share a modified version of AzureHound to make ingestion more efficient, as well as some code to automatically deploy AzureHound to your Azure cloud.

The basics of running AzureHound

The easiest way, by far, to run AzureHound is by using the device authentication flow, which is outlined in the AzureHound documentation. Follow this guide to obtain a refresh token and use that to run AzureHound on a machine which doesn’t have security tooling (e.g. AV/EDR) installed.

Keep the following things in mind when running AzureHound.

- Run AzureHound on a machine which doesn’t have the company security tools installed.

- Run AzureHound with a service principal instead of a “normal” user account. This seems to work better, as service principals appear to be throttled less than normal users when performing API requests. Throttling might be an issue depending on the size and complexity of your Azure environment.

- If you’re running AzureHound for the first time, feel free to run and test it with a normal account.

- The account you use needs to have the following permissions:

  - “Global Reader” role to access all Azure AD (AAD) related configurations.

  - “Reader” on all subscriptions or management groups.

- Keep an eye out for the error messages in the console output. AzureHound is very “forgiving” – it continues on a lot of errors, but at the same time produces results which are not necessarily complete.

- Ensure the machine you’re running AzureHound on has sufficient RAM and disk space. During collection, RAM usage is fairly steady, but it explodes at some point towards the end of the collection. For one environment I was assessing, 20 GB of RAM wasn’t enough to complete the collection. Granted, the output JSON files were roughly 50 GB. You can mitigate this RAM / disk space challenge on Linux VMs by configuring a large swap partition.

- Be patient with importing the results into BloodHound. For large environments, the import gets stuck on “Post processing”. Just leave it running; it gets there eventually.

- Ensure your Neo4j version is 4.x, not 5.x, as the documentation also states. There is significant performance degradation in Neo4j 5.x when combined with BloodHound.
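The RAM and disk space advice in the list above can be turned into a quick pre-flight check before starting a long collection. The sketch below is only an illustration and not part of AzureHound: the function names are hypothetical, the default thresholds (20 GiB of RAM, 50 GiB of disk) are simply the figures from the one environment mentioned above and should be adjusted, and the RAM lookup assumes a Linux machine where `os.sysconf` exposes the page size and page count.

```python
import os
import shutil

GIB = 1024 ** 3  # bytes in one gibibyte


def has_headroom(available_bytes: int, required_gib: float) -> bool:
    """Return True when available_bytes covers required_gib gibibytes."""
    return available_bytes >= required_gib * GIB


def total_ram_bytes() -> int:
    """Total physical RAM in bytes (assumes Linux; uses POSIX sysconf values)."""
    return os.sysconf("SC_PAGE_SIZE") * os.sysconf("SC_PHYS_PAGES")


def preflight(output_dir: str = ".", ram_gib: float = 20, disk_gib: float = 50) -> list:
    """Return human-readable warnings; an empty list means no concerns were found."""
    warnings = []
    if not has_headroom(total_ram_bytes(), ram_gib):
        warnings.append(f"less than {ram_gib} GiB of RAM; consider adding swap")
    if not has_headroom(shutil.disk_usage(output_dir).free, disk_gib):
        warnings.append(f"less than {disk_gib} GiB free in {output_dir}")
    return warnings
```

Calling `preflight("/path/to/output")` before a run and printing the returned warnings is enough to catch an undersized VM early. Swap is not counted here, so a machine with a large swap partition may still be fine despite a RAM warning.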

Once you’ve collected your data and are ready to go, look for the queries towards the end of this blog post and those from ZephrFish on GitHub.

Automating AzureHound collection

Even though a one-off assessment of your Azure environment is already useful, the real power comes when you automate collection (and potentially analysis). Automatically collecting this information ensures you’re looking at the most current data. This is something which BloodHound Enterprise (BHE) does for you. So if you’re looking for an easy solution, consider buying BHE. If for whatever reason that’s not an option for you (yet), continue reading.

After some experimenting, I figured out that the easiest way to run AzureHound periodically is to use either an Azure container or an Azure VM, depending on the size of your environment. Both of them can and should be combined with a system-assigned managed identity.

The currently supported approach and its drawbacks

Using a system-assigned managed identity allows you to use an Azure identity without having credentials stored. This is a neat feature, but AzureHound doesn’t support it out of the box. The most straightforward way of using system-assigned managed identities on the current version of AzureHound (v2.0.0) is by using the Azure CLI inside the container or VM to obtain an access token. This can be done in the following way:

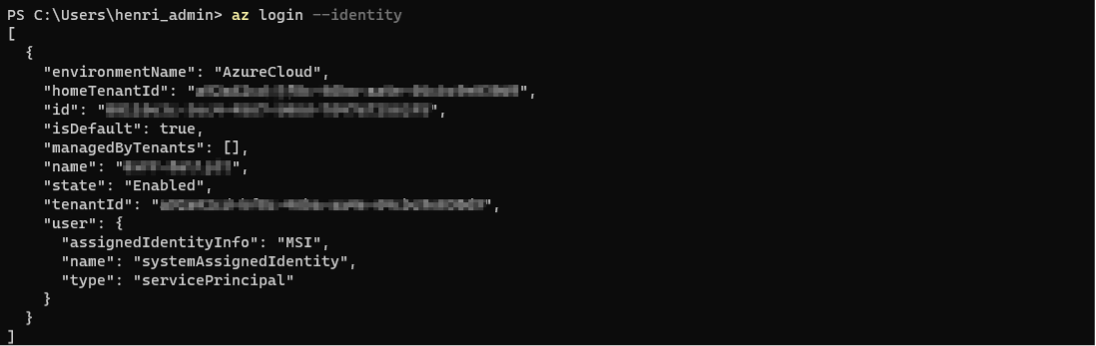

az login --identity

az account get-access-token --resource=https://graph.microsoft.com --query accessToken -o tsv

Next, use the output of the last command as the value for the “-j” flag of AzureHound. Make sure that the resource is exactly as above. If you have an additional trailing slash, it will render your token unusable.

Moreover, you have to go through the above steps twice: once with a token for the Graph API (graph.microsoft.com) to get AAD data, and once with a token for the Azure API (management.azure.com) to get Azure resource data.
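The two token requests described above can be wrapped in a small helper. This is a minimal sketch, assuming a Linux container or VM where the Azure CLI is available as `az` on the PATH and `az login --identity` has already succeeded. The function names are hypothetical; the resource URLs are the two named in the text, written without a trailing slash as the text requires.

```python
import subprocess

# Resource URLs as named in the text; deliberately without a trailing slash.
GRAPH_RESOURCE = "https://graph.microsoft.com"
ARM_RESOURCE = "https://management.azure.com"


def normalize_resource(resource: str) -> str:
    """Strip trailing slashes, which would make the token unusable for AzureHound."""
    return resource.rstrip("/")


def token_command(resource: str) -> list:
    """Build the `az account get-access-token` argument list for one resource."""
    return [
        "az", "account", "get-access-token",
        "--resource", normalize_resource(resource),
        "--query", "accessToken",
        "-o", "tsv",
    ]


def fetch_token(resource: str) -> str:
    """Run the Azure CLI and return the raw access token string."""
    result = subprocess.run(
        token_command(resource), check=True, capture_output=True, text=True
    )
    return result.stdout.strip()


def fetch_both_tokens() -> dict:
    """Fetch one token per API: Graph for AAD data, ARM for Azure resource data."""
    return {
        "graph": fetch_token(GRAPH_RESOURCE),
        "arm": fetch_token(ARM_RESOURCE),
    }
```

Each of the two returned tokens is then passed to a separate AzureHound run as the value of the “-j” flag.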

On a Windows VM, this would look something like this:

Besides the annoyance of fiddling around with tokens and building plumbing for it, there is another issue with this approach. Since we’re using access tokens, they have a limited lifetime (a bit more than an hour). So, if you have a large environment, your token will expire during collection.
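To see how much time a given token has left, you can read its expiry yourself. Access tokens issued by Azure AD are JSON Web Tokens, and the standard `exp` claim in the payload holds the expiry as a Unix timestamp. The sketch below only decodes the payload and does not verify the signature, so it is suitable for a lifetime check and nothing more; the function names and the five-minute margin are illustrative assumptions.

```python
import base64
import json
import time


def jwt_expiry(token: str) -> int:
    """Return the `exp` claim (Unix timestamp) from a JWT without verifying it."""
    payload_b64 = token.split(".")[1]
    # JWT segments are base64url-encoded without padding; restore it first.
    payload_b64 += "=" * (-len(payload_b64) % 4)
    payload = json.loads(base64.urlsafe_b64decode(payload_b64))
    return int(payload["exp"])


def seconds_until_expiry(token: str, now=None) -> int:
    """Seconds left before the token expires (negative if already expired)."""
    current = time.time() if now is None else now
    return jwt_expiry(token) - int(current)


def needs_refresh(token: str, margin_seconds: int = 300, now=None) -> bool:
    """True when the token expires within margin_seconds."""
    return seconds_until_expiry(token, now) <= margin_seconds
```

Comparing `seconds_until_expiry(token)` with the duration of a previous collection run gives a quick indication of whether the token-based approach is viable for your environment.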

The unsupported but convenient approach

All this plumbing for fetching tokens and the expiry issue in large environments is not ideal for large-scale deployments. AzureHound can already log in using an existing service principal. So, the step to using a system-assigned managed identity instead of credentials for a service principal feels small. After a few hours of coding and debugging, it was indeed a relatively small change.

I’ve added a new command-line option to AzureHound to authenticate using a system-assigned managed identity natively. This has the following added benefits:

- No more manual fiddling around with access tokens and the Azure CLI.

- Expired access tokens get automatically refreshed by the tool.

The code is available at https://github.com/0xffhh/AzureHound/tree/hh-fork/feat-az-managed-id. It’s a quick-and-dirty fork, and there is a PR outstanding to merge this functionality into AzureHound. Once it’s there, you can use the code from the official repository.

Now that the use of a system-assigned managed identity is resolved, the next step is to automate the whole collection flow. For this purpose, we’ve published AzureHoundAutoCollect. This container is also published on Docker Hub.

If you want to build everything from scratch, you can build the Docker container and publish it to your desired location.

Next, we need a storage account/container to store the results of the periodic AzureHound scan. Create or designate a blob storage for this purpose. Now, we can use the Azure CLI to create a container instance. I’m assuming that you’re using the container I published; otherwise, you have to modify the location of the container.

az container create --name azurehound --resource-group {YOUR RG NAME} --image 0xffhh/azurehound --restart-policy Never --environment-variables AZ_STORAGE_ACCOUNT={YOUR STORAGE ACCOUNT NAME} AZ_STORAGE_CONTAINER={YOUR STORAGE CONTAINER NAME} --cpu 4 --memory 8 --assign-identity '[system]'

This will create and try to start your container. It will fail the first time due to a lack of permissions for the system-assigned managed identity. The next step is to assign those permissions. The identity is going to need 3 permissions:

- Global Reader role on Azure AD, to fetch all Azure AD related data.

- Reader on all subscriptions and/or management groups.

- “Storage Blob Data Contributor” on the storage account to store the results.

Note: All privileges mentioned above can be further reduced with custom roles, but that’s beyond the scope of this blog post. For now, we’re just using the least-privileged existing roles.
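The two Azure RBAC assignments from the list above (Reader and Storage Blob Data Contributor) can be scripted with the Azure CLI. The sketch below only builds the command lines so you can review them before running anything; all function names and the placeholder IDs are hypothetical. Global Reader is an Azure AD directory role rather than an Azure RBAC role, so it is not covered by `az role assignment create` and has to be assigned separately, for example through the portal.

```python
import shlex


def principal_id_command(resource_group: str, container_name: str = "azurehound") -> list:
    """Command that prints the object ID of the container's system-assigned identity."""
    return [
        "az", "container", "show",
        "--name", container_name,
        "--resource-group", resource_group,
        "--query", "identity.principalId",
        "-o", "tsv",
    ]


def role_assignment_command(principal_id: str, role: str, scope: str) -> list:
    """Command that assigns an Azure RBAC role to the identity at the given scope."""
    return [
        "az", "role", "assignment", "create",
        "--assignee-object-id", principal_id,
        "--assignee-principal-type", "ServicePrincipal",
        "--role", role,
        "--scope", scope,
    ]


def subscription_scope(subscription_id: str) -> str:
    """ARM scope string for a whole subscription."""
    return f"/subscriptions/{subscription_id}"


def storage_account_scope(subscription_id: str, resource_group: str, account: str) -> str:
    """ARM scope string for a single storage account."""
    return (
        f"/subscriptions/{subscription_id}/resourceGroups/{resource_group}"
        f"/providers/Microsoft.Storage/storageAccounts/{account}"
    )


def rbac_commands(principal_id: str, subscription_ids: list, storage_scope: str) -> list:
    """Reader on every subscription plus blob write access on the results account."""
    commands = [
        role_assignment_command(principal_id, "Reader", subscription_scope(sub))
        for sub in subscription_ids
    ]
    commands.append(
        role_assignment_command(principal_id, "Storage Blob Data Contributor", storage_scope)
    )
    return commands


def render(commands: list) -> str:
    """Render the argument lists as shell-quoted lines for review."""
    return "\n".join(shlex.join(command) for command in commands)
```

Printing `render(rbac_commands(...))` gives one reviewable `az` command per line. For management groups, the scope string differs from the subscription scope and would need its own helper.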

Once you’ve assigned these privileges, your container should be ready to roll and produce output.

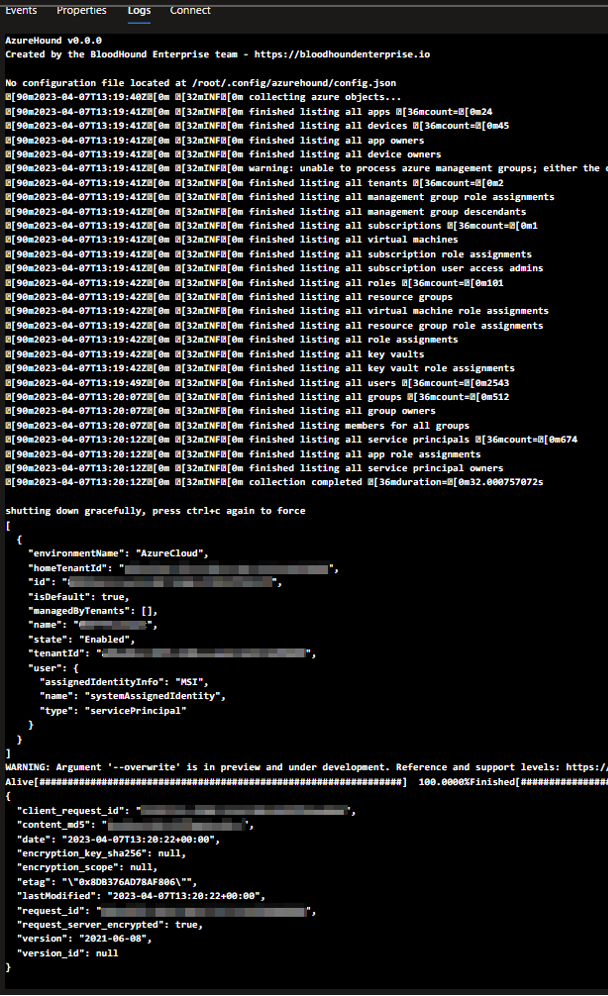

az container start --name azurehound --resource-group {YOUR RG NAME}

If everything goes well, the output of your container should look something like this:

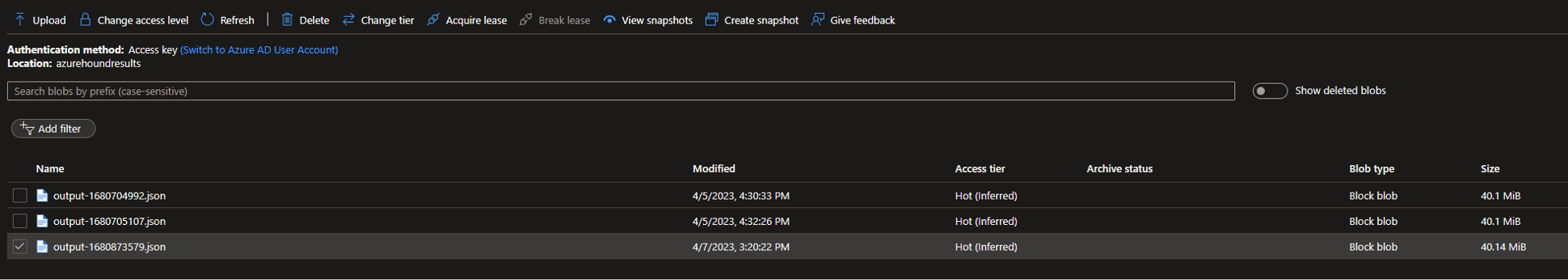

And there should be an output file in your designated blob storage:

The cost of running this container in West Europe with “Pay as you go” pricing is currently around €0.21 per hour. Depending on the size of your environment, collection can take anywhere from 5 minutes to 4 hours (and probably more).
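As a rough worked example, the hourly rate and the run durations quoted above translate into about €0.02 for a 5-minute run and €0.84 for a 4-hour run. The sketch below assumes that cost scales linearly with run time and uses the rate quoted in this post; the function name is hypothetical, and actual pricing should be checked for your region and container size.

```python
HOURLY_RATE_EUR = 0.21  # rate quoted in this post; an assumption for any other setup


def run_cost_eur(duration_minutes: float, hourly_rate: float = HOURLY_RATE_EUR) -> float:
    """Estimated cost of a single collection run, assuming linear billing."""
    return hourly_rate * duration_minutes / 60


# The two ends of the duration range mentioned in the text.
for minutes in (5, 240):
    print(f"{minutes} min: EUR {run_cost_eur(minutes):.2f}")
```

Multiplying the per-run figure by the number of runs per month gives a first estimate for a periodic collection schedule.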

Since this blog post is focused on AzureHound, we’re going to assume that you already have the necessary automation in place to ingest your SharpHound data into BloodHound. You can use the same process for ingesting AzureHound data.

Skeleton queries to identify attack paths

Now that our data is ingested, it’s time to extract some useful information from it. Below is a list of queries I often use to identify misconfigurations. Note that these queries aren’t polished or optimized, but are meant to provide you with insights. You will likely need to extend or modify them slightly to make them work in your environment. Also, I’m assuming that your accounts follow a specific naming convention, and that you use separate admin accounts for admin purposes. You need to make sure that your naming convention is incorporated into the queries below. So don’t copy-paste the queries, but rather use them as a starting point to write your own queries to get the insights which are useful for your organization/environment.

Note: For all queries you can add “LIMIT X” if the query takes too long to run.
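One way to incorporate your own naming convention and a result limit without editing each query by hand is to use Cypher parameters. The sketch below takes the first query of the next section as its example and returns the query text together with a parameter dictionary. The function name and the parameter names `$prefix` and `$limit` are illustrative choices, and the prefix `admin_` is just the convention assumed in this post.

```python
def non_admin_subscription_owner_paths(admin_prefix: str = "admin_", limit: int = 1000):
    """Return (query, parameters) for non-admin users with a path to subscription ownership."""
    query = "\n".join([
        "MATCH p = (n:AZUser)-[*]->()-[r:AZOwns]->(g:AZSubscription)",
        "WHERE NOT (toLower(n.userprincipalname) STARTS WITH $prefix)",
        "RETURN p",
        "LIMIT $limit",
    ])
    # The query lowercases the UPN, so the prefix is lowercased to match.
    parameters = {"prefix": admin_prefix.lower(), "limit": int(limit)}
    return query, parameters
```

The returned pair can be handed to whichever Neo4j client you use; with the official Python driver, for instance, it would typically be passed as `session.run(query, parameters)`. Keeping the naming convention in a parameter means the same query text can be reused across tenants with different admin prefixes.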

Find users who have high privileges on a subscription with their normal account

Goal: Find all paths starting from a user that has high/owner privileges on a subscription. Exclude admin accounts.

Option 1: Find all non-admin users that have a path to subscription ownership. Show only the first 1000 paths.

MATCH p = (n:AZUser)-[*]->()-[r:AZOwns]->(g:AZSubscription)

WHERE NOT(tolower(n.userprincipalname) STARTS WITH 'admin_')

RETURN p

LIMIT 1000

Option 2: Find the shortest path for all non-admin users with a path to high privileges on a subscription.

MATCH p = shortestpath((n:AZUser)-[*]->(g:AZSubscription))

WHERE NOT(tolower(n.userprincipalname) STARTS WITH 'admin_')

RETURN p

Option 3: List all non-admin users, ordered by the number of subscriptions they have high privileges on.

MATCH p = shortestpath((n:AZUser)-[*]->(g:AZSubscription))

WHERE NOT(tolower(n.userprincipalname) STARTS WITH 'admin_')

RETURN n.userprincipalname, COUNT(g)

ORDER BY COUNT(g) DESC

Find apps with interesting delegated permissions

Goal: Find all Azure apps that have access to resources which might be unexpected, due to nested group memberships.

Option 1: Find all apps that have a path which ends in another resource with a relationship that is not “RunAs” and not “MemberOf”. These relationships can be on the path, but not at the end of the path.

MATCH p = (n:AZApp)-[*]->()-[r]->(g)

WHERE any(r in relationships(p) WHERE NOT(r:AZRunsAs)) and NOT(r:AZMemberOf)

RETURN p

LIMIT 3000

Option 2: The same as option 1, but for all service principals (not all apps are necessarily a service principal).

MATCH p = (n:AZServicePrincipal)-[*]->()-[r]->(g)

WHERE any(r in relationships(p) WHERE NOT(r:AZRunsAs)) and NOT(r:AZMemberOf)

RETURN p

LIMIT 3000

Find all VMs with a managed identity that has access to interesting resources

Goal: Find VMs which can have a high impact if they get compromised.

MATCH p=(v:AZVM)-->(s:AZServicePrincipal)

MATCH q=shortestpath((s)-[*]->(r))

WHERE s <> r

RETURN q

Find shortest path from a compromised device to interesting resources

Goal: Find a path from a compromised Azure-joined device to a resource.

MATCH p = shortestpath((n:AZDevice)-[*]->(g))

WHERE n <> g

RETURN p

LIMIT 100

Find external users with odd permissions on Azure objects

Goal: Find users from an external directory which have odd permissions.

Option 1: Find external users with directly assigned permissions.

MATCH p = (n:AZUser)-[r]->(g)

WHERE n.name contains "#EXT#" AND NOT(r:AZMemberOf)

RETURN n.name, COUNT(g.name), type(r), COLLECT(g.name)

ORDER BY COUNT(g.name) DESC

Option 2: Find external users with Owner or Contributor permissions on a subscription.

MATCH (n:AZUser) WHERE n.name contains "#EXT#"

MATCH p = (n)-[*]->()-[r:AZOwns]->(g:AZSubscription)

RETURN p

Option 3: Find external users with generic high privileges in Azure.

MATCH p = (n:AZUser)-[*]->(g)

WHERE n.name contains "#EXT#" AND any(r in relationships(p) WHERE NOT(r:AZMemberOf))

RETURN p

Find objects with the user administrator role

Goal: Find any object that has indirect access to the user administrator role. Note that you can modify this role in the below queries to any other role you deem relevant.

Option 1: Find all objects with a path to a specific role. In this case “user administrator”.

MATCH p = (n)-[*]->(g:AZRole)

WHERE n<>g and NOT(n:AZTenant) AND g.name starts with "USER ADMINISTRATOR"

RETURN p

Option 2: Find all objects, which are not users, with an indirect role assignment.

MATCH p = shortestpath((n)-[*]->(g:AZRole))

WHERE n<>g and NOT(n:AZTenant) AND NOT(n:AZManagementGroup) and NOT(n:AZUser)

RETURN p

Option 3: The same as option 2, except we exclude directory readers, since this is a common role to have.

MATCH p = shortestpath((n)-[*]->(g:AZRole))

WHERE n<>g and NOT(n:AZTenant) AND NOT(n:AZManagementGroup) and NOT(n:AZUser) and NOT(g.name starts with "DIRECTORY READERS")

RETURN p

Find users with high privileges on most objects

Goal: Identify users with high privileges (owner/contributor) on most objects (non-transitive). Find the top 100 users with the most direct contributor permissions. The permission can be changed to AZOwner as well.

MATCH p = (n)-[r:AZContributor]->(g)

RETURN n.name, COUNT(g)

ORDER BY COUNT(g) DESC

LIMIT 100

Closing words

The possibilities for automation and detection are endless, but this blog post is not, so we have deliberately skipped some aspects and details, such as the basics and the analysis of the data. Also, the solution presented here is a DIY solution. If you want similar functionality (and more), consider getting BloodHound Enterprise. As for the Cypher queries provided, there are many more interesting queries to be thought of. @SadProcessor will release a two-part blog series on identifying paths in BloodHound. You can apply the concepts he discusses in those posts to the Azure data as well to identify chokepoints. Regularly check our website or our social media channels on Twitter or LinkedIn to get notified of these blog posts!

Feel free to reach out with any questions or comments through my Twitter @0xffhh or by e-mail via [email protected].
